Caching & CDNs with micro-frontends

Posted 15 April 2026 · 8 min read

Caching in a micro-frontend architecture is more nuanced than in a monolithic frontend. You have a shell, multiple remote manifests, and the chunks they reference, each with different deployment cadences and different tolerance for staleness. This post covers how we've approached it at Mintel, what has broken for us in the past, and what we haven't fully solved yet.

Our stack

We run ~30 micro-frontends using Webpack Module Federation. The shell is a purely static Jamstack app deployed to S3, served via CloudFront, with Akamai in front of everything at the outermost layer. Remotes live at fixed, well-known URLs.

Deployment is a single rclone command that copies the built dist directory to a per-MFE subdirectory in S3. The shell and each remote are independent deployments, maintained by independent teams.

How we configure each asset type

Different assets have different caching requirements. Here's what we currently set and why.

index.html - no-cache

The shell's index.html bootstraps everything. If it's stale, everything downstream is potentially wrong. We set a Cache-Control: no-cache header on it via S3 object metadata, automated as part of the deploy.

no-cache doesn't mean the file won't be cached. It means the CDN or browser must revalidate with the origin before serving it. If the origin returns a 304, the cached copy is served, but if the content has changed, a fresh copy is returned.
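To make the revalidation step concrete, here is a minimal sketch of the decision a cache makes for a no-cache entry. The revalidate function and the shape of the cached and origin objects are hypothetical and exist only for illustration; real caches do this with conditional requests such as If-None-Match.

```javascript
// Hypothetical model of a cache revalidating a `no-cache` entry.
// `cached` is what the cache holds; `origin` is the current object at the origin.
function revalidate(cached, origin) {
  // The cache sends a conditional request carrying the stored ETag (If-None-Match).
  if (cached && cached.etag === origin.etag) {
    // Origin answers 304 Not Modified with no body; the stored copy is reused.
    return { originStatus: 304, body: cached.body };
  }
  // Content changed (or nothing stored): origin answers 200 with the full body.
  return { originStatus: 200, body: origin.body };
}

const stored = { etag: '"abc"', body: '<html>v1</html>' };
console.log(revalidate(stored, { etag: '"abc"', body: '<html>v1</html>' }).originStatus); // 304
console.log(revalidate(stored, { etag: '"def"', body: '<html>v2</html>' }).body); // <html>v2</html>
```

Either way the user gets current content; the 304 path just avoids transferring the body again.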

remoteEntry.js - never cache

remoteEntry.js is the Module Federation manifest. It tells the shell where to find a remote's chunks. When you deploy a remote, this file changes but the filename doesn't.

A stale remoteEntry.js has two failure modes. The obvious one is errors: if it points at chunks from a previous deploy that have since been replaced, you'll get runtime failures. The subtler one is that users won't see the new version of the app until they get a fresh manifest. A remote team can ship a fix or a feature while users carry on running the old code because the manifest is stale.

At the Akamai layer we set Cache-Control: no-store, max-age=0 on remoteEntry.js, which prevents it being cached by the browser. We also use dynamic remote loading via the module-federation-import-remote package, which appends a cache-busting query param to the remoteEntry.js URL by default. Since our CloudFront distribution includes query strings in the cache key, this ensures that even if the file is cached at CloudFront, each request gets a unique URL that bypasses the cache and fetches the latest version from S3.
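As a rough illustration of the cache-busting idea, the sketch below appends a timestamp to the remoteEntry.js URL. It is not the package's actual implementation: the withCacheBuster helper and the t parameter name are assumptions made for this example.

```javascript
// Hypothetical helper: append a unique query parameter to a remoteEntry.js URL.
// The parameter name `t` is an assumption, not taken from any package.
function withCacheBuster(remoteEntryUrl, now = Date.now()) {
  const url = new URL(remoteEntryUrl);
  url.searchParams.set('t', String(now));
  return url.toString();
}

console.log(withCacheBuster('https://cdn.example.com/remote-a/remoteEntry.js', 1700000000000));
// https://cdn.example.com/remote-a/remoteEntry.js?t=1700000000000
```

This only bypasses a cache whose key includes the query string, which is why the CloudFront cache key setting matters.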

Chunks - no explicit headers

The JS chunks that remoteEntry.js references are content-addressed via Webpack's contenthash substitution. When the content of a file changes, the hash changes, and so does the filename. That means you can cache chunks aggressively - a new deploy produces new filenames, so the CDN treats them as new assets automatically.

Configuring this in your output.filename is straightforward:

    output: {
      filename: '[name].[contenthash].js',
    }

We've had cases where teams forgot to configure contenthash for their remote's asset filenames. The chunks deployed with predictable names, got cached, and subsequent deploys weren't reflected for users until the cache TTL expired naturally.
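One way to catch a missing contenthash before it ships is to scan the build output for unhashed filenames. The sketch below is hypothetical rather than something we run today: findUnhashedAssets and its allow list are illustrative names, and the pattern assumes hex hashes of at least 8 characters between dots.

```javascript
// Hypothetical check: flag emitted JS files whose names carry no hash segment.
// Assumes hashes are hex strings of at least 8 characters, delimited by dots
// (matching '[name].[contenthash].js'); remoteEntry.js is deliberately unhashed.
const HASHED_NAME = /\.[0-9a-f]{8,}\.js$/;

function findUnhashedAssets(filenames, allowList = ['remoteEntry.js']) {
  return filenames.filter(
    (name) => name.endsWith('.js') && !allowList.includes(name) && !HASHED_NAME.test(name)
  );
}

console.log(findUnhashedAssets(['remoteEntry.js', 'main.3f9a1c0b7d2e4a6f8b1c.js', 'vendors.js']));
// [ 'vendors.js' ]
```

A deploy step could fail when this list is non-empty, turning a silent caching bug into a visible build error.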

We don't currently set explicit Cache-Control headers on chunks. In their absence, CloudFront caches these files for the default TTL configured on the cache behaviour (24 hours unless it has been changed), and browsers fall back to a heuristic freshness lifetime, typically derived from the Last-Modified header S3 returns. At the Akamai layer we set no-store behaviour on origin responses, so all caching happens either within the browser or at CloudFront.

404 handling

Our shell is a single-page app. Routing is client-side. If a user navigates directly to a route or refreshes, the CDN looks for a file at that path in S3, finds nothing, and by default returns a 404.

The fix is configuring CloudFront to serve index.html for 4xx responses from the origin. AWS documents this as custom error responses in the CloudFront distribution settings.
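A minimal sketch of that fallback follows. The object mirrors the fields of a CloudFront custom error response, but the values are assumptions rather than our production settings, and applyFallback is a hypothetical model of the edge behaviour. CloudFront configures these per status code, so covering several 4xx codes means one entry each.

```javascript
// Sketch of the SPA fallback. The object mirrors the fields of a CloudFront
// CustomErrorResponse; the values are assumptions, not our production settings.
const spaFallback = {
  ErrorCode: 404,
  ResponseCode: '200',
  ResponsePagePath: '/index.html',
  ErrorCachingMinTTL: 0,
};

// Hypothetical model of what the edge does with an origin response.
function applyFallback(originResponse, rule = spaFallback) {
  if (originResponse.status !== rule.ErrorCode) return originResponse;
  return { status: Number(rule.ResponseCode), servedPath: rule.ResponsePagePath };
}

console.log(applyFallback({ status: 404, servedPath: '/reports/42' }));
// { status: 200, servedPath: '/index.html' }
```

Note that the rule cannot tell a client-side route from a genuinely missing file: both come back as a 200 with the shell's HTML.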

Akamai proxies any request that doesn't match a known API or MPA route through to CloudFront, so this fallback behaviour is handled at the CloudFront layer and applies to all MFE routes.

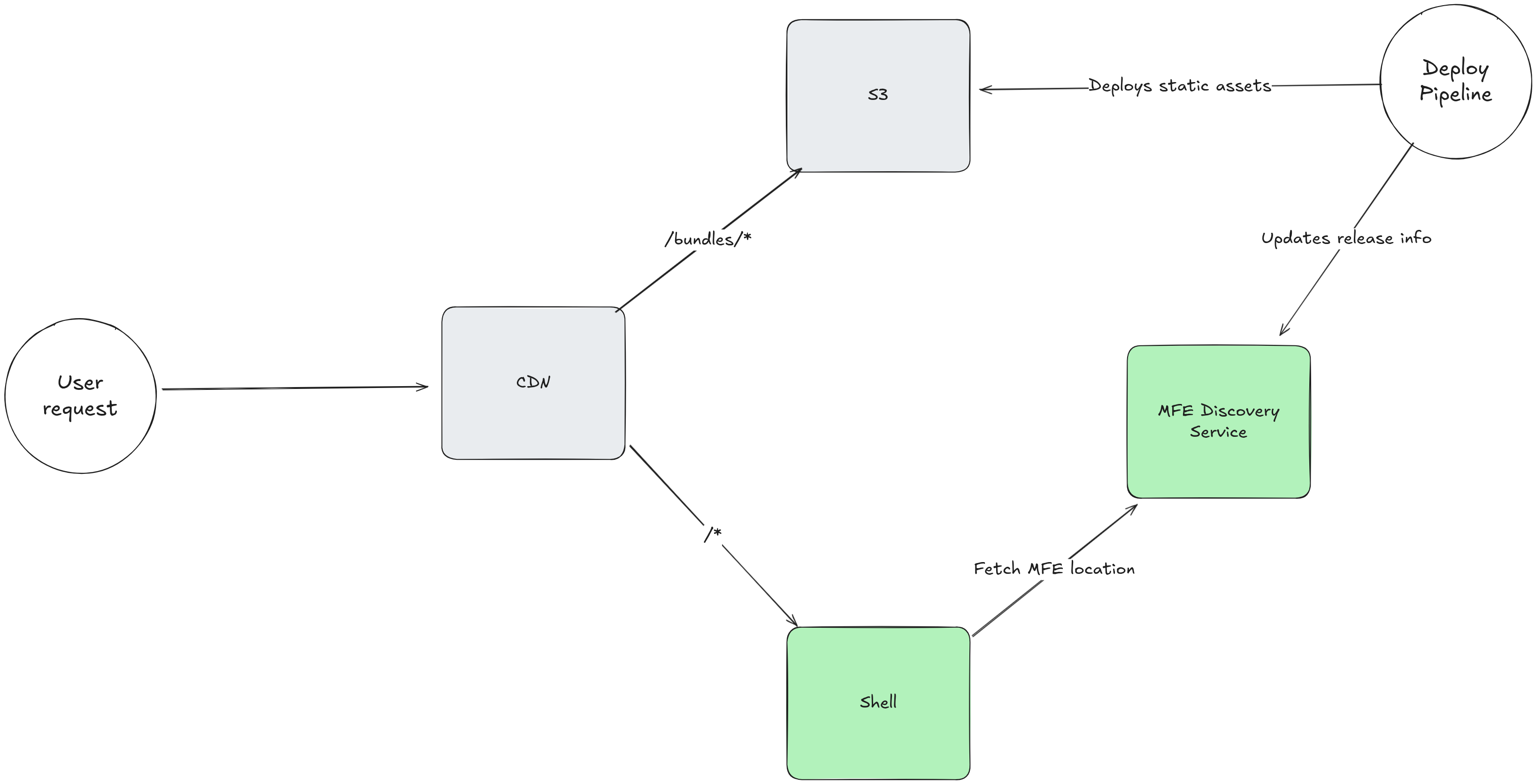

User request flow

Remotes are loaded lazily, wrapped in React.lazy and dynamic imports. The shell only fetches a remote's remoteEntry.js when the user navigates somewhere that needs it.
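The lazy behaviour can be sketched without React. The lazyRemote helper below is hypothetical and only similar in spirit to React.lazy: the loader runs on first use and its promise is reused afterwards. The load callback stands in for fetching remoteEntry.js and the exposed module.

```javascript
// Hypothetical lazy loader: `load` is only invoked on first use and the
// resulting promise is reused afterwards, similar in spirit to React.lazy.
function lazyRemote(load) {
  let pending = null;
  return () => {
    if (pending === null) pending = load();
    return pending;
  };
}

let fetches = 0;
const getRemoteA = lazyRemote(() => {
  fetches += 1; // stands in for fetching remoteEntry.js and the exposed module
  return Promise.resolve({ default: 'RemoteA' });
});

console.log(fetches); // 0
getRemoteA();
getRemoteA();
console.log(fetches); // 1
```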

CloudFront/S3 outage

The setup above evolved over time, partly in response to incidents. In January 2025, an AWS issue meant that CloudFront started failing to fetch content from its S3 origin, resulting in 404 NoSuchBucket errors. Because CloudFront is configured to serve index.html for 4xx responses from S3 (the standard SPA catch-all setup), those errors were converted to 200 responses before reaching Akamai. Akamai had no way of knowing anything was wrong and cached them normally. When AWS recovered, users were still being served those bad responses from both Akamai's edge and their own browser caches.

Purging in Akamai is slow and painful. You can't glob a path and clear everything matching a pattern; you need specific URLs. With dozens of MFEs and hundreds of JS chunk files, that's not a practical option under pressure. We ended up in a war room, working through purge requests that sat loading and watching caches clear gradually over a few hours as TTLs expired naturally. Purging also does nothing for users who have bad responses cached in their browsers.

The escape hatch we landed on was changing the contenthash length in the Webpack config across key MFEs, then redeploying. Changing the contenthash length changes all the generated filenames, which forced the CDN to treat them as new assets rather than serving cached bad responses. It worked, but we arrived at it under pressure; it wasn't a documented runbook step.
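As a sketch of what that change can look like, the hypothetical buildOutput helper below produces the output section of a Webpack config with the hash length as a parameter. It assumes the length is set with the [contenthash:N] placeholder syntax; Webpack's output.hashDigestLength option is another way to get the same effect.

```javascript
// Hypothetical helper producing the `output` section of a Webpack config.
// `[contenthash:N]` truncates the hash to N characters, so changing N
// renames every emitted file even when the content is unchanged.
function buildOutput(hashLength) {
  return {
    filename: `[name].[contenthash:${hashLength}].js`,
    chunkFilename: `[name].[contenthash:${hashLength}].js`,
  };
}

console.log(buildOutput(16).filename);
// [name].[contenthash:16].js
```

Moving from, say, 16 to 17 characters and redeploying is enough to produce an entirely new set of URLs.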

Since then, we have disabled Akamai caching for MFE assets and added multi-region failover for the S3 bucket to reduce the risk of being in the same position again. We also started explicitly setting no-cache on index.html to ensure changes are picked up quickly and any erroneous fallback responses aren't cached for long.

The honest answer to "what's the plan if we get bad responses cached" is still: we don't have a clean solution. The contenthash length trick remains our nuclear option for forcing new filenames across the board when we need to invalidate everything in a hurry.

Improving our caching strategy

Writing this post has been a useful exercise in reflecting on how our current caching strategy works. Caching config isn't something you revisit often when the system is working, and the current setup has held up well enough in practice.

However, our cache configuration is split between S3 object metadata for index.html and Akamai rules for remoteEntry.js, and other assets have no explicit cache headers at all. There's no single place to look to understand the full caching policy. We also don't cache at the outer Akamai layer at all, a strong reaction to the pain of our previous incident, but this is hurting performance and increasing bandwidth costs.

The cleaner approach is to set explicit Cache-Control headers at the origin via S3 object metadata for every asset type, and treat the CDN layers as caches that respect origin headers rather than places where caching policy is defined. That also means if we ever swap out or reconfigure the Akamai layer, the caching behaviour follows from the origin rather than being silently lost.
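A minimal sketch of such a single policy, expressed as a function a deploy step could call for each uploaded object. The cacheControlFor name, the hex-hash pattern, and the no-cache default for unhashed files are assumptions; the header values are the ones discussed in this post, with the immutable value for hashed chunks being a proposal rather than something we set today.

```javascript
// Hypothetical mapping from an S3 object key to the Cache-Control value
// that a deploy step would attach as object metadata.
const HASHED_CHUNK = /\.[0-9a-f]{8,}\.(js|css)$/; // assumes hex content hashes

function cacheControlFor(key) {
  const file = key.split('/').pop();
  if (file === 'index.html') return 'no-cache';
  if (file === 'remoteEntry.js') return 'no-store, max-age=0';
  if (HASHED_CHUNK.test(file)) return 'max-age=31536000, immutable';
  // Unhashed files are mutated in place, so fall back to revalidation.
  return 'no-cache';
}

console.log(cacheControlFor('remote-a/main.3f9a1c0b7d2e4a6f8b1c.js'));
// max-age=31536000, immutable
```

Keeping the whole policy in one function gives the single place to look that the current setup lacks.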

We aren't setting cache headers for chunks at all, so we're relying on CDN defaults and browser heuristics to determine how long they are cached. Setting explicit Cache-Control: max-age=31536000, immutable headers on content-hashed chunks, and re-enabling Akamai's respect-origin caching behaviour, would be a good improvement, ensuring they're cached aggressively and correctly as immutable assets. With our current setup, though, there's no guarantee that every team has correctly configured their build output filenames to use contenthash. There is a different approach that would solve both problems at once, but it requires a more significant architectural change.

The alternative: versioned URLs and a discovery service

Everything above assumes remotes live at fixed, well-known URLs. That's the simplest deploy model, but it's also the root cause of why remoteEntry.js caching is hard, because when you're mutating a file in place you can never safely cache it for long.

The more robust approach is to include versioning in the URL itself:

https://cdn.example.com/remote-a/v1.4.2/remoteEntry.js

With versioned paths, remoteEntry.js becomes an immutable file, just like a content-hashed chunk: a given URL only ever serves one version. You can cache it with max-age=31536000, immutable. Old versions stay in S3 indefinitely, so users mid-session aren't broken by a deploy. Rollback becomes a matter of pointing the shell at a previous version rather than redeploying.

To make this work, the shell can't hardcode remote URLs. You need a discovery service - something the shell calls at boot time to get the current URL for each remote:

    {
      "remote-a": "https://cdn.example.com/remote-a/v1.4.2/remoteEntry.js",
      "remote-b": "https://cdn.example.com/remote-b/v2.1.0/remoteEntry.js"
    }
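On the shell side, consuming that response could look roughly like the sketch below. The resolveRemoteEntry function is hypothetical, and fetching the response is deliberately left out. Bear in mind that the discovery response then becomes the one file that is mutated in place, so it would presumably need the short-lived caching that remoteEntry.js needs today.

```javascript
// Hypothetical shell-side lookup against a discovery response shaped like the
// JSON above. How the response is fetched (and cached) is left out.
function resolveRemoteEntry(discovery, remoteName) {
  const url = discovery[remoteName];
  if (typeof url !== 'string') {
    throw new Error(`No remoteEntry.js registered for "${remoteName}"`);
  }
  return url;
}

const discovery = JSON.parse(`{
  "remote-a": "https://cdn.example.com/remote-a/v1.4.2/remoteEntry.js",
  "remote-b": "https://cdn.example.com/remote-b/v2.1.0/remoteEntry.js"
}`);

console.log(resolveRemoteEntry(discovery, 'remote-a'));
// https://cdn.example.com/remote-a/v1.4.2/remoteEntry.js
```

Rolling back remote-a would then mean changing one value in the discovery response to an earlier version path.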

However, we don't do this, even though we identified the pattern and added it to our architectural blueprint as a possible future direction over a year ago. It would catch teams missing contenthash configuration, but that's a discipline problem, not a reason to migrate 30 MFEs. More aggressive caching at each CDN layer would also help performance and reduce bandwidth costs, although the impact is hard to quantify without a detailed analysis of cache hit rates, and we could achieve a similar effect by setting immutable headers on chunks within our current approach.

Having a discovery service would also enable more complex deployment patterns such as canary releases or deployment-level feature flags, but all of this adds complexity and operational overhead. In most cases we're able to feature flag within the application logic itself, which makes it easier to reason about the code our users are running. Canary releases also require a time investment in automated monitoring and alerting to be truly useful.

While a discovery service remains a potential future option, our more immediate actions are focused on improving our current caching strategy within our simpler deployment approach.